It is really frustrating to have a song or melody stuck in your head when you cannot remember its title or artist. That is why questions like these appear so often on the web:

- “Di Di De Da Di Di De Da Di” What that song called? URGENT!

- What is the name of that Saxophone song that’s like “DO DO DEE DO DO DO DOOOO DO DO DEE DO DO DO DEE DO DOO”?

To solve this problem, researchers and developers proposed Query by Singing/Humming (QBSH), which lets users sing or hum a short piece of a song’s melody into their phone and retrieve information about the song.

– I know it – Shazam!

No, not exactly. Shazam is an audio fingerprinting service that can only recognize the original recording of a song as it is played. Singing to Shazam won’t work, no matter how good a singer you are. In fact, the app shows a prominent notice when users open it, especially in Asia, saying, “the singing voice could not be recognized here”. This suggests that a noticeable portion of user queries are sung or hummed.

Query by singing/humming technology, or melody indexing/searching, could be used in the following areas:

- In mobile search, it provides a more convenient acoustic query interface when a user can only remember part of a song’s tune;

- In a karaoke application, QBSH lets users hum a small part of a melody to find the song they want in a large song database;

- It can also generate a score that measures the melodic similarity between a user’s singing and the original artist’s. In other words, this score can be used for singing evaluation, rating a cappella performances by how closely they follow the original melody.

Technology

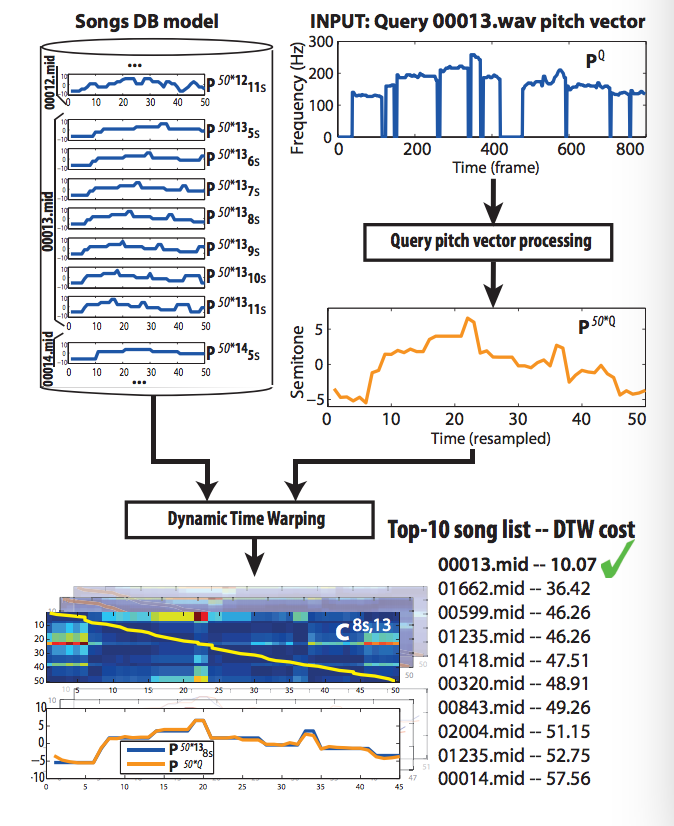

A typical QBSH system can be divided into three parts:

- Melody database processing module: takes music sources (MIDI, singing voice or even polyphonic music files) and generates a song melody database;

- Acoustic processing module: transcribes the user’s hummed or sung acoustic queries into searchable melody features;

- Melody indexing & matching module: computes the similarity between each database reference and the user’s query using a chosen measure, and produces the final list of candidates ranked by similarity.
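The three modules above can be illustrated with a deliberately small sketch. It assumes the acoustic front end has already produced a frame-wise fundamental frequency track in Hz (with 0 for unvoiced frames), and it uses a simple mean-removed, resampled contour comparison as a stand-in for a real matching method. The names `DATABASE`, `to_melody_feature`, `resample` and `rank` are hypothetical and chosen only for this example.

```python
import math

# Hypothetical melody database: title -> MIDI note numbers (one per note).
DATABASE = {
    "twinkle": [60, 60, 67, 67, 69, 69, 67],
    "ode_to_joy": [64, 64, 65, 67, 67, 65, 64, 62],
}

def hz_to_midi(f0_hz):
    """Convert a frequency in Hz to a fractional MIDI note number (A4 = 440 Hz = 69)."""
    return 69.0 + 12.0 * math.log2(f0_hz / 440.0)

def to_melody_feature(f0_track):
    """Acoustic processing: drop unvoiced frames and remove the mean pitch,
    so the contour does not depend on the key the user sang in."""
    voiced = [hz_to_midi(f) for f in f0_track if f > 0]
    if not voiced:
        raise ValueError("query contains no voiced frames")
    mean = sum(voiced) / len(voiced)
    return [p - mean for p in voiced]

def resample(seq, n):
    """Linearly resample seq to n points (n >= 2) to remove tempo differences."""
    if len(seq) == 1:
        return [seq[0]] * n
    out = []
    for i in range(n):
        pos = i * (len(seq) - 1) / (n - 1)
        lo = int(pos)
        hi = min(lo + 1, len(seq) - 1)
        frac = pos - lo
        out.append(seq[lo] * (1 - frac) + seq[hi] * frac)
    return out

def rank(query_f0, database, n_points=32):
    """Matching: mean absolute contour difference, smallest distance first."""
    query = resample(to_melody_feature(query_f0), n_points)
    results = []
    for title, notes in database.items():
        mean = sum(notes) / len(notes)
        ref = resample([p - mean for p in notes], n_points)
        dist = sum(abs(a - b) for a, b in zip(query, ref)) / n_points
        results.append((title, dist))
    return sorted(results, key=lambda item: item[1])
```

Global resampling only compensates for a uniform tempo change, which is one reason practical systems turn to more flexible measures such as dynamic time warping.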

Within this structure, various kinds of QBSH systems have been researched.

Wang et al. proposed a QBSH system that combines the earth mover’s distance (EMD) and dynamic time warping (DTW) distances using a weighted sum rule.
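To make the idea of fusing two distances concrete, here is a minimal sketch, not the method of Wang et al. It assumes melodies are given as mean-removed pitch contours in semitones, computes a textbook DTW distance on the contours and a one-dimensional EMD on their pitch histograms, and combines them with a weight `w`. The histogram features, the length normalization and the default weight are assumptions made for illustration.

```python
def dtw_distance(a, b):
    """Classic dynamic time warping with absolute pitch difference as local cost."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]

def pitch_histogram(seq, lo=-12, hi=12):
    """Normalized histogram of pitches over one-semitone bins, clamped to [lo, hi]."""
    bins = [0.0] * (hi - lo + 1)
    for p in seq:
        idx = min(max(int(round(p)), lo), hi) - lo
        bins[idx] += 1.0 / len(seq)
    return bins

def emd_1d(p, q):
    """Earth mover's distance between two histograms over the same ordered bins
    with equal total mass: the sum of absolute cumulative differences."""
    total, carried = 0.0, 0.0
    for pi, qi in zip(p, q):
        carried += pi - qi
        total += abs(carried)
    return total

def fused_distance(query, ref, w=0.5):
    """Weighted sum of a length-normalized DTW distance and a histogram EMD."""
    dtw = dtw_distance(query, ref) / (len(query) + len(ref))
    emd = emd_1d(pitch_histogram(query), pitch_histogram(ref))
    return w * dtw + (1.0 - w) * emd
```

In a real system the two scores would need to be normalized to comparable ranges, and the weight tuned on held-out queries, before the sum is meaningful.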

Ryynanen and Klapuri proposed extracting pitch vectors with a fixed-size time window and matching them using locality-sensitive hashing (LSH).
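The following sketch shows one way windowed pitch vectors could be hashed and looked up. The source does not say which LSH family the authors used, so random-hyperplane (sign) hashing is assumed here purely for illustration. The window length, number of bits, and the `HyperplaneLSH` class are hypothetical.

```python
import random
from collections import defaultdict

def pitch_vectors(contour, window=4, hop=1):
    """Slide a fixed-size window over a pitch contour and return
    (start_index, mean-removed window) pairs, which are key-invariant."""
    vectors = []
    for start in range(0, len(contour) - window + 1, hop):
        win = contour[start:start + window]
        mean = sum(win) / window
        vectors.append((start, [p - mean for p in win]))
    return vectors

class HyperplaneLSH:
    """Sign-of-random-projection hashing: vectors pointing in similar
    directions tend to fall into the same bucket."""

    def __init__(self, dim, n_bits=8, seed=0):
        rng = random.Random(seed)
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]
        self.buckets = defaultdict(list)

    def _key(self, vec):
        return tuple(
            sum(p * v for p, v in zip(plane, vec)) >= 0 for plane in self.planes
        )

    def add(self, vec, payload):
        self.buckets[self._key(vec)].append(payload)

    def query(self, vec):
        return list(self.buckets.get(self._key(vec), []))

def build_lsh(database, window=4):
    """Index every window of every reference melody as (title, start_index)."""
    lsh = HyperplaneLSH(dim=window)
    for title, notes in database.items():
        for start, vec in pitch_vectors(notes, window):
            lsh.add(vec, (title, start))
    return lsh
```

Each bucket hit is only a candidate position in a reference melody. A slower, exact comparison would normally follow to confirm or reject it.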

Salamon and Rohrmeier proposed a two-stage retrieval method for QBSH. In the first stage, the number of candidates is reduced by an indexing method based on n-grams. In the second stage, a more sophisticated matching method, based on local alignment with modified cost functions, is applied to the remaining candidates.
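A two-stage search of this kind can be sketched as below. This is an illustration under assumptions, not the authors' implementation: melodies are note sequences converted to pitch intervals, the first stage is an inverted index over interval 3-grams, and the second stage is plain Smith-Waterman local alignment with fixed match, mismatch and gap scores in place of the modified cost functions, which the text does not specify. All function names are hypothetical.

```python
from collections import defaultdict

def intervals(notes):
    """Pitch differences between consecutive notes (transposition-invariant)."""
    return [b - a for a, b in zip(notes, notes[1:])]

def ngrams(seq, n=3):
    return [tuple(seq[i:i + n]) for i in range(len(seq) - n + 1)]

def build_index(database, n=3):
    """Stage 1 index: interval n-gram -> set of titles containing it."""
    index = defaultdict(set)
    for title, notes in database.items():
        for gram in ngrams(intervals(notes), n):
            index[gram].add(title)
    return index

def candidates(query_notes, index, n=3, min_hits=1):
    """Keep only titles sharing at least min_hits distinct n-grams with the query."""
    hits = defaultdict(int)
    for gram in set(ngrams(intervals(query_notes), n)):
        for title in index.get(gram, ()):
            hits[title] += 1
    return [title for title, count in hits.items() if count >= min_hits]

def local_alignment(a, b, match=2, mismatch=-1, gap=-1):
    """Smith-Waterman score: best-scoring locally aligned pair of subsequences."""
    best = 0
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, 1):
            score = match if x == y else mismatch
            cur.append(max(0, prev[j - 1] + score, prev[j] + gap, cur[j - 1] + gap))
        best = max(best, max(cur))
        prev = cur
    return best

def search(query_notes, database, index, n=3):
    """Stage 2: rank the surviving candidates by local alignment score."""
    q = intervals(query_notes)
    scored = [
        (local_alignment(q, intervals(database[title])), title)
        for title in candidates(query_notes, index, n)
    ]
    return [title for _, title in sorted(scored, reverse=True)]
```

The n-gram stage is cheap but coarse, so unrelated songs can pass it. The alignment stage is what separates them, at a cost that only has to be paid for the short candidate list.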

Several commercial QBSH applications have also been released, such as SoundHound and ACRCloud. SoundHound is a well-known mobile app designed to identify music playing in your surroundings or sung/hummed by the user, while ACRCloud provides APIs and SDKs that let third parties integrate the query-by-humming feature.

Note that although current state-of-the-art QBSH systems achieve reasonable performance in real-world cases, there is still a lot of room for improvement in melody indexing and matching, especially for web-scale melody databases.

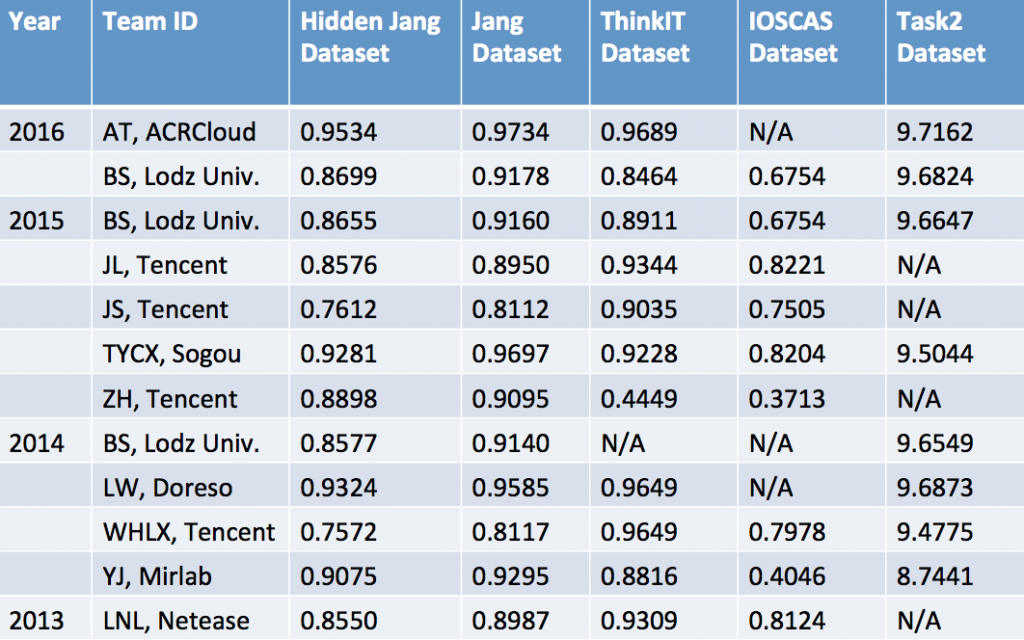

If you are interested in this research topic, you may want to follow or participate in the QBSH task at MIREX. The Music Information Retrieval Evaluation eXchange (MIREX) is an annual evaluation campaign for MIR algorithms, coupled to the ISMIR conference. Since it started in 2005, MIREX has fostered advancements both in specific areas of MIR and in the general understanding of how MIR systems and algorithms are to be evaluated.

The following table shows the QBSH task results for the last three years.

Use Cases

Xiaomi is one of the largest smartphone manufacturers in China. Xiaomi integrates ACRCloud’s music recognition and Query by Singing/Humming technologies in MIUI. Hundreds of millions of MIUI users can not only identify music playing in their surroundings, but also hum a tune and have the ACRCloud engine recognize it on MIUI’s music platform, Mi Music. Users can then stream or download the song from Mi Music’s extensive music library.

ACRCloud powers humming recognition on Mi Music. Users can hum or sing a tune to Mi Music and get the title and artist name of the song within a few seconds.

Omusic is the third biggest music service in Taiwan. It integrates ACRCloud’s Query by Humming service in its mobile apps, making it the first and only music service in its market to offer humming recognition.

With this feature, millions of Omusic users can not only recognize music playing in the background, but also hum a tune and have the ACRCloud engine recognize it. Users can then stream or download the song from Omusic’s extensive music library.